Cloud & Backend EP 3 - How Load Balancers Work?

The key to cloud infrastructure

Hello and welcome to a new edition of the Progressive Coder newsletter.

The topic for today - How Load Balancers Work?

Load balancers are one of the most important pieces of cloud infrastructure.

Without load balancers, cloud servers would be like waiters trying to serve an entire restaurant by themselves.

Sure, it's possible, but the end result won't be pretty.

In today’s post, I explain how load balancers work and what makes them so special.

A load balancer is a hardware device or software component that distributes incoming network traffic across multiple servers.

🤔 But why do we need to distribute the traffic?

High-traffic websites serving thousands or millions of concurrent requests cannot rely on a single machine. Such websites typically scale horizontally, which means spreading the workload across several cheaper machines.

However, adding machines is only one part of the equation.

🤔 How do you ensure that all machines get some traffic and that no single machine gets overburdened?

The answer is to use a load balancer.

A load balancer is like a traffic cop 👮 that sits between clients (such as web browsers or mobile apps) and application servers that host web applications, databases or other services.

When a client makes a request, the load balancer routes the traffic to one of the available servers. It also ensures that no single server is overloaded while others remain underutilized.

🤔 But how do load balancers choose which request should go to which server instance?

👉 Load Balancing Algorithms

Depending on the implementation, a load balancer can use various algorithms to make the choice.

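Broadly, these algorithms fall into two categories: static algorithms, which follow a fixed rule regardless of the current state of the servers, and dynamic algorithms, which take the live state of each server into account.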

Static Algorithms

👉 Round Robin

Requests are distributed sequentially across a group of servers. The main assumption is that the service is stateless because there is no guarantee that requests from the same user will reach the same instance.
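To make the idea concrete, here is a minimal sketch of round robin in Python. The class name `RoundRobinBalancer` and the server names are made up for illustration, and a real load balancer would also handle health checks and concurrency.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers one after another, wrapping around at the end."""

    def __init__(self, servers):
        # cycle() repeats the server list forever in the same order.
        self._servers = cycle(servers)

    def pick(self):
        # Every call returns the next server in the rotation.
        return next(self._servers)


# Hypothetical server names, used only for this example.
balancer = RoundRobinBalancer(["server-a", "server-b", "server-c"])
picks = [balancer.pick() for _ in range(5)]
print(picks)
```

Notice that the balancer knows nothing about who sent the request, which is why the same user can land on a different instance each time.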

👉 Sticky Round Robin

This variant of round robin sends all requests from the same user to the same instance. That is useful when the service keeps per-user state such as session data, though the load may end up less evenly spread than with plain round robin.

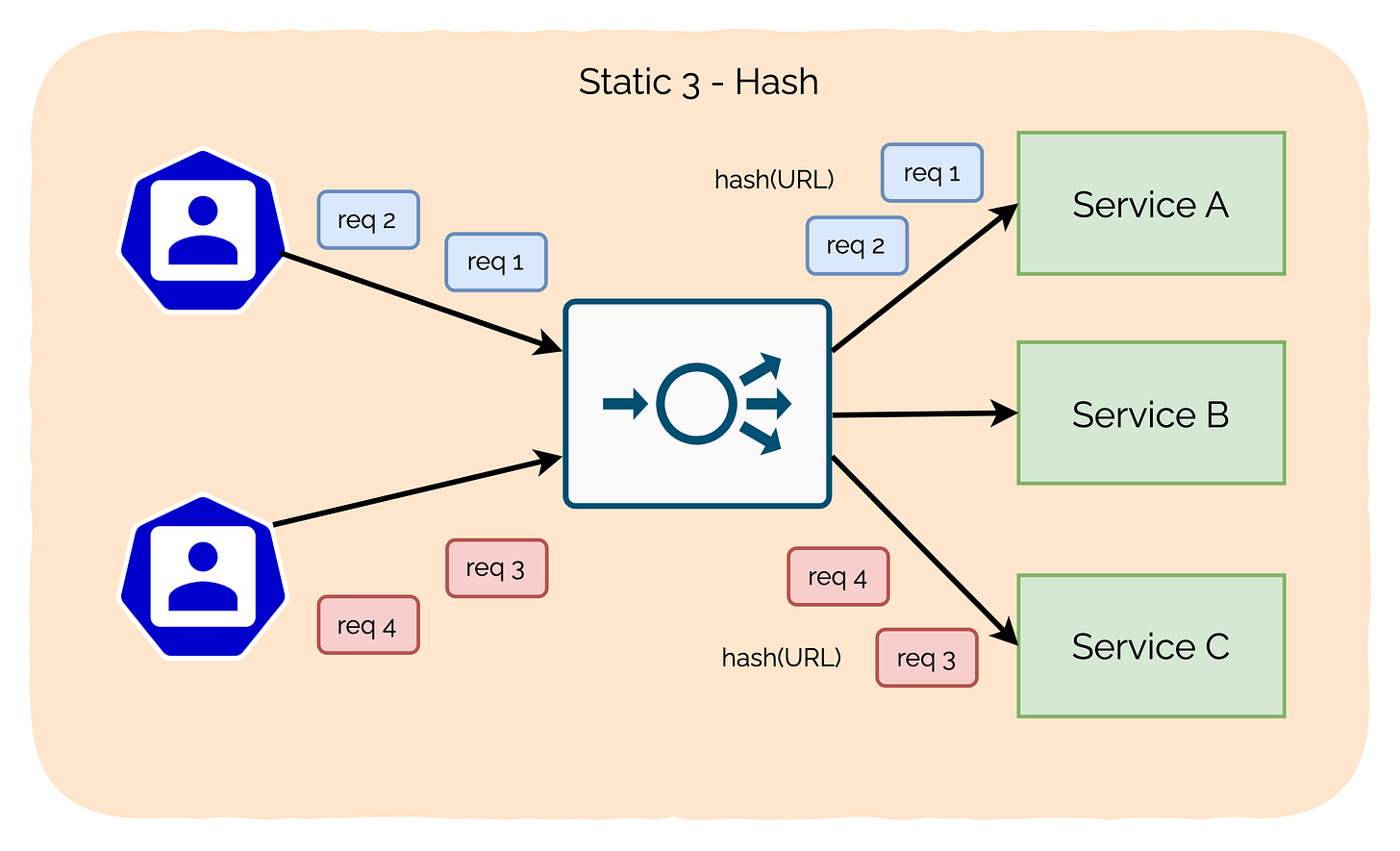
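Sticky round robin can be sketched by remembering which server each client was given. This is only an illustration: `StickyRoundRobinBalancer` and the client ids are hypothetical, and real load balancers usually track stickiness with a cookie or the client IP rather than an in-memory dictionary.

```python
from itertools import cycle


class StickyRoundRobinBalancer:
    """Round robin for new clients, same server for returning clients."""

    def __init__(self, servers):
        self._next_server = cycle(servers)
        self._assigned = {}  # client id -> server chosen on first request

    def pick(self, client_id):
        # Only advance the rotation when we see a client for the first time.
        if client_id not in self._assigned:
            self._assigned[client_id] = next(self._next_server)
        return self._assigned[client_id]


sticky = StickyRoundRobinBalancer(["server-a", "server-b"])
clients = ["alice", "bob", "alice", "carol", "bob"]
sticky_picks = [sticky.pick(client) for client in clients]
print(sticky_picks)
```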

👉 Hash

This algorithm distributes requests based on the hash of a key value.

The key can be the IP address or the URL of the request.
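As a rough sketch, hash-based selection can be as simple as hashing the key and taking the result modulo the number of servers. The function name `pick_by_hash`, the choice of SHA-256, and the sample IP address are my assumptions for the example, not something a particular load balancer prescribes.

```python
import hashlib


def pick_by_hash(key, servers):
    """Map a key (for example a client IP or URL) to one server."""
    # A stable hash is used so the result is the same across runs;
    # Python's built-in hash() is randomized per process for strings.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["server-a", "server-b", "server-c"]
chosen = pick_by_hash("203.0.113.7", servers)
print(chosen)
```

The same key always maps to the same server as long as the server list stays the same. With this simple modulo approach, adding or removing a server changes the mapping for most keys.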

👉 Weighted Round Robin

Each server gets a weight value. This value determines the proportion of traffic that will be directed to that particular server.

Servers with higher weights receive a larger share of the traffic, while servers with lower weights receive a smaller share.

For example, if Server A has a weight of 4 and Server B has a weight of 2, Server A will receive twice as much traffic as Server B.

This is a useful approach when different servers have different capacity levels and you want to assign traffic based on capacity.
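Here is a simple sketch of the Server A and Server B example. It expands each server into the rotation as many times as its weight, which is the most basic way to get the proportions right. The function name `weighted_rotation` is made up, and production load balancers typically interleave the picks more smoothly instead of sending several requests in a row to the same server.

```python
from collections import Counter
from itertools import cycle


def weighted_rotation(weights):
    """Build an endless rotation where each server appears `weight` times."""
    rotation = [
        server
        for server, weight in weights.items()
        for _ in range(weight)
    ]
    return cycle(rotation)


# Weights taken from the example: Server A = 4, Server B = 2.
rotation = weighted_rotation({"server-a": 4, "server-b": 2})
weighted_picks = [next(rotation) for _ in range(6)]
share = Counter(weighted_picks)
print(share)
```

Over one full rotation of six requests, Server A gets four and Server B gets two.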

Dynamic Algorithms

👉 Least Connections

In this algorithm, a new request is sent to the server instance with the least number of connections.

Some implementations also weigh the connection count against the relative computing capacity of each server, so that a larger server is allowed to hold more connections.
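A minimal sketch of least connections might look like the following. It treats all servers as equal and ignores capacity to keep things short. The class and method names (`LeastConnectionsBalancer`, `acquire`, `release`) are hypothetical.

```python
class LeastConnectionsBalancer:
    """Sends each new request to the server with the fewest open connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self):
        # min() returns the first server with the lowest count on a tie.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call this when the connection to the server is closed.
        self.active[server] -= 1


lc = LeastConnectionsBalancer(["server-a", "server-b"])
first = lc.acquire()    # both idle, so the first server is used
second = lc.acquire()   # server-a is busy, so server-b is used
lc.release(second)      # server-b finishes its request
third = lc.acquire()    # server-b is idle again and gets the request
print(first, second, third)
```

Unlike the static algorithms, the decision here depends on what the servers are doing at the moment the request arrives.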

👉 Least Response Time

In this algorithm, the load balancer assigns incoming requests to the server with the lowest response time in order to minimize the overall response time of the system.

This is a good fit for cases where response time is critical.
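One way to sketch this is to keep a running average of response times per server and pick the lowest. The moving-average approach, the `smoothing` parameter, and the class name `LeastResponseTimeBalancer` are assumptions for the example. Real load balancers measure and combine these signals in their own ways.

```python
class LeastResponseTimeBalancer:
    """Picks the server with the lowest average response time seen so far."""

    def __init__(self, servers, smoothing=0.2):
        # Every server starts at 0.0 so that new servers get tried first.
        self.avg_ms = {server: 0.0 for server in servers}
        self.smoothing = smoothing

    def pick(self):
        return min(self.avg_ms, key=self.avg_ms.get)

    def record(self, server, elapsed_ms):
        # Exponential moving average: recent requests count more.
        old = self.avg_ms[server]
        self.avg_ms[server] = (1 - self.smoothing) * old + self.smoothing * elapsed_ms


lrt = LeastResponseTimeBalancer(["server-a", "server-b"])
lrt.record("server-a", 120.0)  # server-a answered slowly
lrt.record("server-b", 40.0)   # server-b answered quickly
fastest = lrt.pick()
print(fastest)
```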

👉 Is Load Balancing Worth It?

Load balancing is extremely important for cloud-native solutions.

A few reasons:

You can’t have scalability without a load balancer distributing the traffic between multiple instances.

You can’t have high availability without a load balancer routing each request to a healthy instance and away from failed ones.

Load balancers can also add a layer of security by helping to absorb or filter DDoS traffic before it reaches the application servers.

👉 Over to you

Have you used load balancing in your applications?

If yes, which algorithms did you use?

Write your replies in the comments section.

If you found today’s post useful, consider sharing it with friends and colleagues.