How to Handle Disastrous Duplicate Events in Your App?

Cloud & Backend EP 8 - Not handling them can cost you!

Don’t ignore duplicate events in your application!

If not taken care of, they can wreck your system and result in catastrophic situations.

As a backend developer, it is your responsibility 👮 to design a system that can deal with duplicate events.

Hello 👋 and welcome to a new edition of the Cloud & Backend newsletter.

In this post, I discuss why duplicate events occur and how you can handle them efficiently.

To support Progressive Coder, consider subscribing if you haven’t already done so.

Why do Duplicate Events occur?

Typically, we don’t want to miss events because events are often tied to business-critical operations.

Think of important events such as a customer doing a bank account transaction or placing an order on an e-commerce platform.

Who wants to miss a critical event related to money and risk loss of business or reputation? 💰

But this is a double-edged sword.

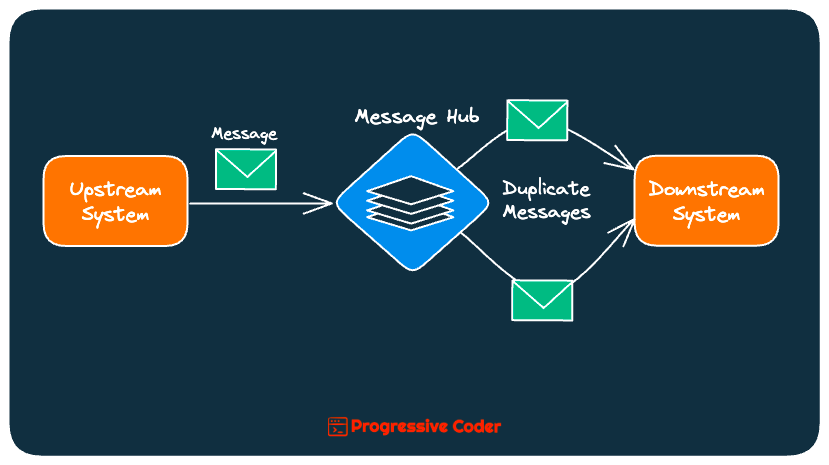

Since we don’t want to miss an event, we usually go for an at-least-once delivery guarantee in our messaging systems to ensure that each message is delivered at least once.

However, it does not mean the message can’t be delivered more than once.

If a timeout occurs and we aren’t sure whether the message got delivered, we send it again at the risk of creating a duplicate.
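To make the retry problem concrete, here is a minimal sketch. `FlakyChannel` and `send_at_least_once` are hypothetical names invented for this illustration, not part of any real messaging library. The channel delivers the message but loses the first acknowledgement, so the sender retries and the downstream system ends up with two copies.

```python
class FlakyChannel:
    """Hypothetical channel: delivery succeeds, but the first ack is lost."""

    def __init__(self):
        self.delivered = []          # what the downstream system received
        self._lose_next_ack = True

    def send(self, message):
        self.delivered.append(message)   # the message does arrive
        if self._lose_next_ack:
            self._lose_next_ack = False
            raise TimeoutError("ack not received")
        return "ack"


def send_at_least_once(channel, message, max_attempts=3):
    """Retry until an ack arrives; return the number of attempts used."""
    for attempt in range(1, max_attempts + 1):
        try:
            channel.send(message)
            return attempt
        except TimeoutError:
            continue  # we can't tell whether it arrived, so we resend
    raise RuntimeError("message could not be delivered")


channel = FlakyChannel()
attempts = send_at_least_once(channel, {"id": "order-1", "amount": 50})
print(attempts, len(channel.delivered))
```

The sender did nothing wrong here. It simply had no way of knowing that the first attempt had already landed.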

Ideally, we want the downstream system to ignore the message if it is a duplicate and has already been processed earlier.

Let’s look at how to do so.

🤖 Approach#1 - Naturally Idempotent Operations

An idempotent operation is an operation that can be applied multiple times and still leaves the system in the same state as applying it once.

Such operations make it easy to deal with duplicate events.

For example, updating account information such as names or phone numbers is a naturally idempotent operation. If you want to set the customer’s phone number to some value, you can do so as many times as you want. The result will always be the same.
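As a tiny sketch of this idea (the `set_phone_number` function and the dictionary-based customer record are assumptions made for illustration), applying the same update twice leaves the record exactly as it was after the first update:

```python
def set_phone_number(customer, phone):
    """Overwrite the phone number; repeating the call changes nothing."""
    customer["phone"] = phone
    return customer


customer = {"name": "Alice", "phone": "555-0100"}

after_first = dict(set_phone_number(customer, "555-0199"))
after_duplicate = dict(set_phone_number(customer, "555-0199"))

print(after_first == after_duplicate)
```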

👉 As a developer, try to build small operations that are naturally idempotent and don’t create a bunch of side effects. It will solve a lot of issues automatically.

But not all operations are naturally idempotent.

You cannot apply the same withdrawal transaction on a bank account multiple times without actually withdrawing the money as many times.

Similarly, you cannot place the same order multiple times without actually placing the order as many times.

The point is that such operations require a mechanism to check whether an incoming event is a duplicate.
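A quick sketch shows the problem. The `withdraw` function and the account dictionary are hypothetical, and the starting balance is made up for the example. Handling the same withdrawal event twice takes the money out twice:

```python
def withdraw(account, amount):
    """Subtract the amount from the balance. NOT idempotent."""
    account["balance"] -= amount
    return account


account = {"balance": 100}
event = {"type": "withdraw", "amount": 30}

withdraw(account, event["amount"])   # original event
withdraw(account, event["amount"])   # duplicate delivery of the same event

print(account["balance"])  # 40, although the customer withdrew only 30
```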

🕹️ Approach#2 - Make your Operation Idempotent

This brings me to the next step where you must actively strive to make your operations idempotent.

And how can you do so?

Well, to keep things simple, you need to find a way to determine if a particular event is new or duplicated. If it is new, you process it. Else, you ignore it.
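The "process if new, ignore otherwise" rule can be sketched roughly like this. It assumes every event carries an `id` field, and it uses an in-memory set purely for illustration. A real service would need durable storage so that the record of processed ids survives a restart.

```python
processed_ids = set()        # illustration only; not durable
account = {"balance": 100}


def handle(event):
    """Apply the event if it is new. Return True if it was processed."""
    if event["id"] in processed_ids:
        return False                      # duplicate, ignore it
    account["balance"] -= event["amount"]
    processed_ids.add(event["id"])
    return True


event = {"id": "txn-1", "amount": 30}
first = handle(event)
second = handle(event)       # the same event delivered again

print(first, second, account["balance"])
```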

There are a couple of ways you can do it.

👉 Using Monotonically Increasing Numbers

This is by far the simplest approach.

When you handle an event, you can extract the id (a monotonically increasing number assigned when the event is created) from the event data. Then, you can use that id as the primary key of the table that stores the events.

If you try to insert an event with the same id twice, you get a duplicate key exception. This means you have encountered a duplicate event and can safely ignore it.
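Here is a minimal sketch of the primary key approach using Python's built-in `sqlite3` module. The `processed_events` table and the `record_event` helper are names assumed for this example. In SQLite, the duplicate key shows up as `sqlite3.IntegrityError`. Other databases raise their own equivalent.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE processed_events (id INTEGER PRIMARY KEY, payload TEXT)"
)


def record_event(conn, event_id, payload):
    """Insert the event; return False if its id was already stored."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO processed_events (id, payload) VALUES (?, ?)",
                (event_id, payload),
            )
        return True
    except sqlite3.IntegrityError:
        return False  # duplicate key, so this is a duplicate event


print(record_event(conn, 1, "order placed"))
print(record_event(conn, 1, "order placed"))
```

If handling the event also changes other tables, doing that work in the same transaction as the insert keeps the two in step.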

Of course, simple things lead to complicated issues. ⚖️

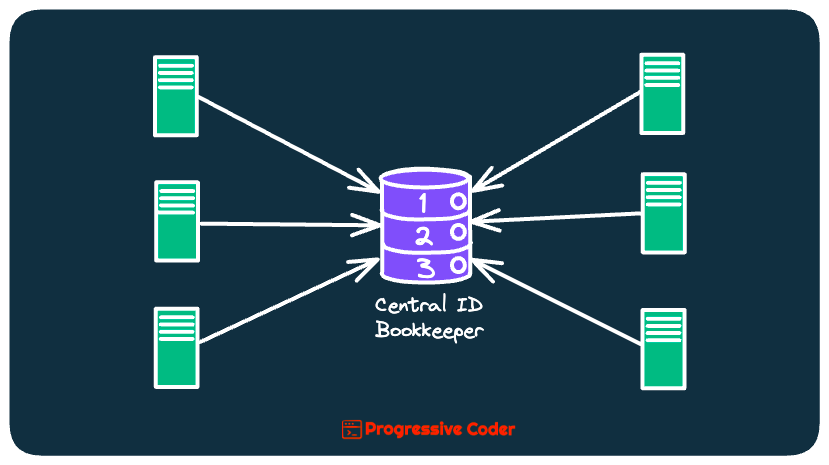

In a distributed system with multiple instances of a service, you need a central registry to keep track of the current number and assign the next one.

This central service acts as a single source of truth when it comes to determining the id.

But it can also become a single point of failure and is not a scalable solution in the case of a distributed system with multiple instances.
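To see why the central registry becomes a bottleneck, consider this sketch. `CentralSequence` is a hypothetical stand-in for the registry, and threads stand in for service instances. Every instance has to go through the same lock to get its next number.

```python
import threading


class CentralSequence:
    """Hypothetical central registry that hands out the next number."""

    def __init__(self, start=0):
        self._current = start
        self._lock = threading.Lock()

    def next_id(self):
        with self._lock:          # every caller queues up here
            self._current += 1
            return self._current


sequence = CentralSequence()
issued = []


def service_instance(count):
    """Simulated service instance requesting ids from the registry."""
    for _ in range(count):
        issued.append(sequence.next_id())


threads = [threading.Thread(target=service_instance, args=(100,)) for _ in range(3)]
for thread in threads:
    thread.start()
for thread in threads:
    thread.join()

ids = sorted(issued)
print(len(ids), ids[0], ids[-1])
```

The ids come out unique and gap-free, but only because all three instances were serialized through one component. If that component is down, nobody gets an id.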

👉 Using UUIDs

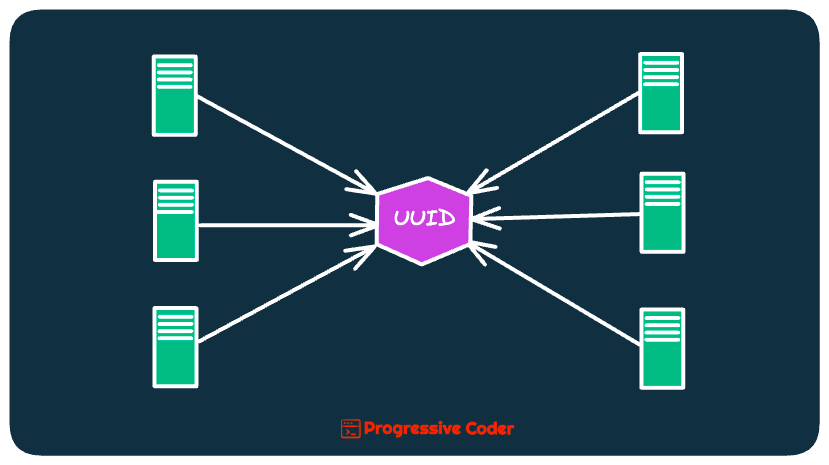

The second approach to identifying duplicate events is to use a UUID instead of a monotonically increasing number as the id.

UUIDs are not strictly guaranteed to be unique, but a randomly generated (version 4) UUID carries 122 random bits, which makes collisions extremely unlikely. Even after generating a trillion IDs, the chance of seeing a single collision is roughly 1 in 10 trillion.
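To get a feel for how unlikely a collision is, here is a rough calculation based on the standard birthday approximation. It assumes version 4 UUIDs, which have 122 random bits. The function names are made up for this sketch.

```python
import math

RANDOM_BITS = 122  # random bits in a version 4 UUID


def collision_probability(n, bits=RANDOM_BITS):
    """Birthday approximation: p is about 1 - exp(-n^2 / (2 * 2^bits))."""
    return -math.expm1(-(n * n) / (2 * 2**bits))


def ids_for_probability(p, bits=RANDOM_BITS):
    """Roughly how many ids are needed to reach collision probability p."""
    return math.sqrt(2 * 2**bits * math.log(1 / (1 - p)))


print(collision_probability(10**12))   # chance of any collision in a trillion ids
print(ids_for_probability(0.5))        # ids needed for a 50% chance
```

Under these assumptions, a trillion IDs give a collision probability on the order of 1e-13, and it takes on the order of 10^18 IDs to reach a 50% chance.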

These unique UUIDs allow us to identify duplicates easily while also avoiding the pitfalls of a centralized service.

In practice, every service instance in a distributed system can generate UUIDs locally using some sort of utility, with no coordination between instances.
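A rough sketch of the UUID approach might look like the following. It uses Python's standard `uuid` and `sqlite3` modules. The `new_event` and `consume` helpers and the `processed_events` table are assumptions for illustration. The producer attaches a UUID when the event is created, and the consumer uses it as the primary key to spot redeliveries.

```python
import sqlite3
import uuid


def new_event(payload):
    """Any service instance can mint an id locally, with no coordination."""
    return {"id": str(uuid.uuid4()), "payload": payload}


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE processed_events (id TEXT PRIMARY KEY, payload TEXT)")


def consume(event):
    """Store the event id; a duplicate key means we have seen it before."""
    try:
        with conn:
            conn.execute(
                "INSERT INTO processed_events (id, payload) VALUES (?, ?)",
                (event["id"], event["payload"]),
            )
    except sqlite3.IntegrityError:
        return "duplicate"
    return "processed"


event_a = new_event("order placed")   # created by instance A
event_b = new_event("order placed")   # created by instance B, separate order

results = [consume(event_a), consume(event_a), consume(event_b)]
print(results)
```

One thing to watch out for: the UUID must be generated once, when the event is created, and reused on every retry. If the sender generates a fresh UUID for each attempt, every retry looks like a brand new event.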

🕹️ Over to you

Have you found the need to deal with duplicate events or records in your system?

If yes, which techniques do you use?

Write your answers in the comments section below.

That’s it for today!

If you are finding this newsletter valuable, consider doing either or both of these:

👉 Support the Newsletter - if you haven’t done so already, consider becoming a paid subscriber.

👉 Share it - Progressive Coder grows thanks to word of mouth. Share the article with your friends or colleagues to whom it might be useful!

Wishing you a great week ahead! ☀️

See you later.

OK, I see. Now I have a different duplicate case to discuss.

Suppose we have a service that reads from a Kafka topic and then forwards the event to another topic.

Now, just after reading the event from the topic and forwarding it to the next topic, this service goes down and doesn't commit/acknowledge the forwarding. In this case, it will read the same message once more and do the operation again, creating a duplicate. How should we handle this scenario? Should we add a DB in between to keep track of the events being processed, so that we can detect the duplicates?

I did not get the idea with UUIDs. Can you give an example, please? Thanks.